A technology race with no agreed rules, no neutral referee, and consequences that extend far beyond the laboratories where it is being run: this is the reality of artificial intelligence in 2025. Across continents, capability gains are accelerating even as the governance structures meant to contain their risks remain fragmented, underfunded, and often captured by the very interests they are meant to oversee.

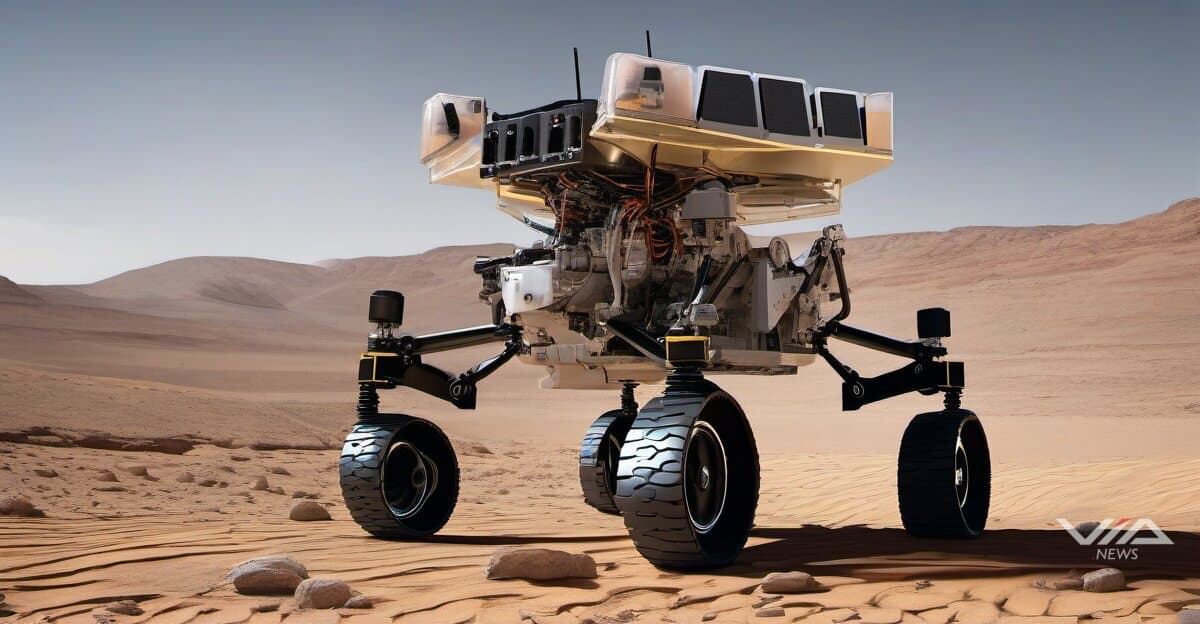

The most dramatic illustration of what autonomous AI now demands of its designers comes not from Earth but from space. NASA's Mars rovers operate in a communication environment where round-trip signal delays range from several minutes to more than 40 minutes, depending on the relative positions of the two planets, making real-time human control physically impossible. Onboard AI must interpret terrain, assess risk, and act alone. These systems are not theoretical: they are functioning, consequence-bearing autonomous agents operating in one of the most unforgiving environments accessible to human technology. They are, in miniature, a preview of the alignment challenge that engineers will face as autonomous systems are deployed in earthbound settings where human oversight is structurally impossible or commercially inconvenient.

That preview is already arriving. Humanoid robotics — long confined to controlled demonstrations — is moving into operational deployment at factories, logistics hubs, and care facilities across the United States, China, Japan, and Germany. The convergence of increasingly capable vision-language models with physical hardware is compressing the timeline from prototype to production. Yet the questions that matter most for public safety cannot be answered by benchmark scores: How do these systems behave at the margins? Who bears liability in Germany when a robot injures a warehouse worker? What legal recourse exists in Japan when an eldercare system fails a vulnerable patient? Regulatory frameworks in most jurisdictions were not written with these scenarios in mind.

The competitive dynamics driving this deployment pressure are themselves shifting. Nvidia's Nemotron 3 Nano has been rated by Artificial Analysis as the most efficient open model among systems of equivalent size, a signal that the performance-per-parameter race, long dominated by raw scale, is entering a new phase driven by architectural ingenuity. China's Moonshot AI, with its Kimi K2 model, and the DAPO reinforcement learning framework emerging from Chinese research institutions reflect a broader pattern: as Western compute restrictions tighten, Chinese AI development is pivoting toward efficiency and algorithmic innovation rather than brute-force scaling. This is not a trend that export controls alone can contain.

Resource pressure is concentrating decision-making power in ways that have systemic implications. OpenAI co-founder Greg Brockman has publicly acknowledged the core arithmetic his company faces: every unit of compute allocated to serving hundreds of millions of ChatGPT users globally is a unit not available to train the next generation of models. The company is reportedly seeking another $100 billion at a valuation of about $800 billion while remaining unprofitable, a financial structure that embodies Silicon Valley's characteristic high-stakes optimism and postpones any reckoning with its costs. Similar dynamics are visible at Anthropic, Google DeepMind, and Mistral, each navigating the tension between commercial deployment and frontier research under investor timelines that have little patience for caution.

Talent, meanwhile, is concentrating alongside capital. The movement of senior engineers between a small number of frontier labs, exemplified by hires such as Peter Steinberger's arrival at OpenAI, is narrowing the effective competitive field even as open-weight models from Meta, Mistral, and a growing number of Chinese institutions attempt to redistribute capability more broadly. The European Union's AI Act, however ambitious its intent, was not designed to address a landscape in which the most consequential decisions are made by a handful of private entities headquartered primarily in two countries.

The governance tensions this creates are hardening into concrete controversies. Allegations that Google suppressed AI-generated medical safety warnings — if substantiated — would represent a case study in the accountability vacuum that emerges when AI systems are embedded in high-stakes domains without adequate transparency requirements. The European Medicines Agency and national health regulators from the UK's MHRA to Brazil's ANVISA have yet to develop coherent frameworks for auditing AI-assisted medical tools deployed at scale. The gap between regulatory ambition and technical reality is not a temporary condition; it is a structural feature of a field that is advancing faster than democratic institutions can deliberate.

Voice cloning litigation — cases in which synthetic reproductions of individuals' voices have been generated without consent — is moving through courts in the United States and, increasingly, in the European Union under GDPR provisions that were written before generative audio became commercially viable. The outcomes of these cases will establish precedents that reach far beyond any individual plaintiff, touching questions of identity, consent, and the legal personhood of synthetic representations that no legislature has yet directly addressed.

What unites these disparate developments — autonomous navigation, humanoid deployment, efficiency competition, resource concentration, governance failure — is a single underlying dynamic: capability is advancing faster than accountability, and the costs of that gap are not being borne equally. The engineers and investors who benefit most from accelerating deployment are not the hospital patients, warehouse workers, or ordinary users who absorb most of the risk when systems fail. Bridging that asymmetry will require not just better regulation but a different conception of whose interests AI development is meant to serve — a conversation that is only beginning, and that no single nation can have alone.
